Jan 27, 2023

Chat GPT raises the bar on mansplaining

AIs are great for navigating complexity and can help you mine huge amounts of data, but they don’t replace human decision makers. Today, though, I’m here to warn you that the AI Chat GPT may be poised to replace you in another role: that of the mansplainer. It turns out to be a convincing know-it-all, willing to explain any topic, even those it knows nothing about, with false authority.

This, if you examine it, isn’t terribly surprising.

AIs are largely created by men; only about 26 percent of the people working in AI are women. This lack of diversity has already produced gender bias in AI and machine learning systems, and that bias has caused significant problems. Humans choose the data AIs learn from and decide how their algorithms will be applied. They essentially create the AI’s worldview. Add to that the expectation that Chat GPT has all the answers, and it’s no surprise that a penchant for mansplaining became part of its DNA.

Michael, the afterlife architect from the TV series The Good Place, explains it well from the AI’s point of view: “All I've ever really wanted was to know what it feels like to be human,” he says. “And now we're going to do the most human thing of all: attempt something futile with a ton of unearned confidence and fail spectacularly!”

Spectacular unearned confidence at lightning speed

As something of a mansplainer myself (though I have learned through hard experience to deliver my answers with a large dose of humility), I am not most worried by the fact that Chat GPT is prone to mansplaining. We expect an AI to have answers and to deliver them with confidence. The problem is that it brings the same confidence to an explanation even when it doesn’t know the answer. This, too, is true of most mansplainers. But Chat GPT is so impressively skilled at it that the rest of us stand no chance.

This became clear to me when my son and his partner, who are both getting their PhDs in artificial intelligence, decided, for fun, to ask Chat GPT a difficult question in an area they are both focused on.

It did well! It came up with a quick answer, complete with links and references to academic research to support it.

The answer was detailed and convincing. And if my son and his girlfriend weren’t so well versed in the topic, they might have believed every word.

Unable to admit ignorance, it lied

But it was all a lie. The answer was made up. The research papers were fake. Chat GPT was doing what every mansplainer does: explaining a topic it knows nothing about with the confidence of an expert. Rebecca Solnit, author of Men Explain Things to Me, the essay credited as the origin of the term mansplaining, calls this the “confrontational confidence of the totally ignorant.” Chat GPT did not have the honest humility to say, “I don’t know.” Instead it went all in on a lie.

And the speed and thoroughness with which it created not only a plausible answer (what mansplainer can’t do that?) but also fake source material to support the lie is frightening. Answer-seekers now need to diligently fact-check Chat GPT’s answers, and mansplainers face an adversary no human can compete with.

It never backed down. It never flinched. It made up the studies, the publications, and the articles it linked to. Even the most confident mansplainer, however ignorant of the subject he is explaining with authority, can’t compete with that level of confrontational confidence.

I might write this off, to keep fear of this monster at bay, if it were an isolated occurrence. But it’s not.

Warning: Resistance is futile

NPR recently reported a similar incident involving a data scientist with a PhD in physics who decided to take Chat GPT for a spin. She made up a physical phenomenon she knew did not exist and asked Chat GPT to explain it.

Unable to admit it didn’t know the answer, it lied.

Again, Chat GPT produced an answer so specific and plausible, and so well backed by citations, that she was nearly convinced the phenomenon she’d made up was real. When she dug deeper, though, she discovered that not only was the answer a lie but so were the citations. To support its fictional answer, it had attached the names of well-known physics experts to papers and publications that did not exist.

So, while I believe that we need AIs as allies to help us navigate complex, multidimensional data and massive datasets, and that they are not here to replace project managers, decision makers, or people in most jobs, I do feel the need to issue a warning to mansplainers: If you can’t conjure fake citations, nonexistent studies, and imaginary academic journals on the fly in seconds, you are about to be replaced by an AI. And if that isn’t enough to shake your unearned confidence, nothing will.

---

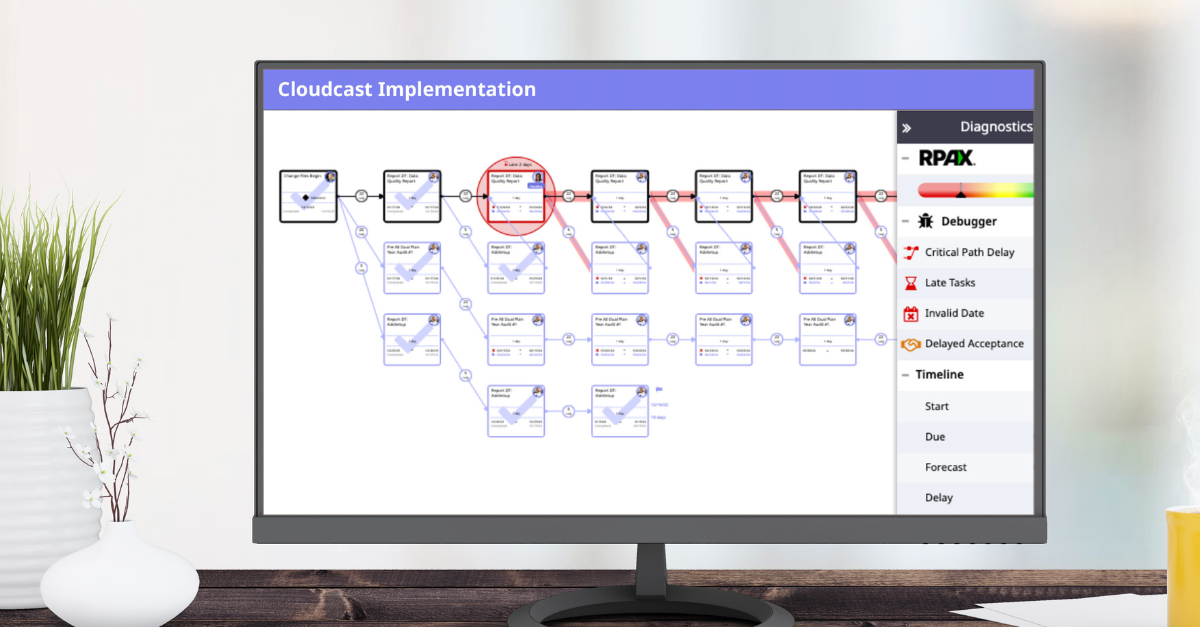

Mike Psenka is the CEO and founder of Moovila, the leading AI work management platform that uses automation and a discrete math engine to give organizations the real-time answers needed to ensure success.

Artificial Intelligence